Day 21: Config Management: Implementing YAML-Based System Settings


1. The “Global Constants File” Trap

Every junior quant engineer writes one. You’ve seen it. You’ve written it.

```python
# config.py
MAX_POSITION_SIZE = 100
RISK_LIMIT = 0.02
ALPACA_API_KEY = "PK3X7V..."
PAPER_TRADING = True
```

It works on your laptop. It works in your backtest. It fails in production in ways that don’t produce errors — they produce losses.

The failure isn’t a crash. It’s a silent drift. The risk limit that was 0.02 in testing becomes 0.2 because someone did a find-replace. The API key rotates and now lives in two places. You deploy to a new server, forget to update the constants file, and spend 20 minutes wondering why positions are capped at 10 shares instead of 1000. Worst of all: you’re running paper and live off the same config, separated by a single boolean you forgot to flip.

This is not a style problem. This is a system reliability problem that directly maps to operational risk.

2. The Failure Mode: Three Paths to Account Ruin

2.1 Path 1: Type Coercion Corruption

YAML is not Python. When you write:

```yaml
risk_per_trade: .02
max_drawdown: 5%
slippage_bps: 3
```

yaml.safe_load() gives you {'risk_per_trade': 0.02, 'max_drawdown': '5%', 'slippage_bps': 3}. Notice that max_drawdown is a string. In Python 3, your downstream check if portfolio_dd > config['max_drawdown'] does not quietly return False. It raises TypeError ('>' not supported between instances of 'float' and 'str'). An unhandled exception mid-session is just as bad: the risk check never completes, the guard never triggers, and you blow through your drawdown limit.
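Here is a minimal sketch of both the failure and a load-time defense, using only the standard library. The dict stands in for yaml.safe_load() output. require_number() is a hypothetical helper, not part of PyYAML.

```python
import math


class ConfigValidationError(ValueError):
    """Raised when a config value has the wrong type or is out of range."""


def require_number(name: str, value: object) -> float:
    """Hypothetical load-time check: accept only real numbers, never strings or bools."""
    # bool is a subclass of int, so a YAML `yes` (parsed as True) would otherwise pass as 1
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        raise ConfigValidationError(
            f"{name} must be a number, got {type(value).__name__} {value!r}"
        )
    # YAML's .inf and .nan parse as floats, so reject them explicitly
    if not math.isfinite(value):
        raise ConfigValidationError(f"{name} must be finite, got {value}")
    return float(value)


# What yaml.safe_load() returns for the snippet above
raw = {"risk_per_trade": 0.02, "max_drawdown": "5%", "slippage_bps": 3}
portfolio_dd = 0.06

try:
    if portfolio_dd > raw["max_drawdown"]:  # the unguarded drawdown check
        print("halt trading")
except TypeError as exc:
    print(f"guard crashed mid-session: {exc}")
    # guard crashed mid-session: '>' not supported between instances of 'float' and 'str'

try:
    clean = {key: require_number(key, value) for key, value in raw.items()}
except ConfigValidationError as exc:
    print(f"rejected at load time: {exc}")
    # rejected at load time: max_drawdown must be a number, got str '5%'
```

The point of the sketch is timing. The bad value fails once, at load, with a named key, instead of inside the risk check while positions are open.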

2.2 Path 2: No Environment Isolation

A single-file config that’s shared between paper and live is a loaded gun. The moment you want to test a new strategy, you copy-paste the live config and edit it. Now you have two files that look the same but diverge over weeks. A merge conflict resolves the wrong way. Your paper-trading position_multiplier: 0.1 gets written into your live config.

2.3 Path 3: No Hot-Reload = Missed Risk Windows

Markets move. Risk parameters need to move with them. If your system requires a restart to update a position limit during a volatility spike, you’re dead. The 30-second restart window during a flash crash is where drawdown happens. Your config system needs to respond to file changes while the event loop is running.

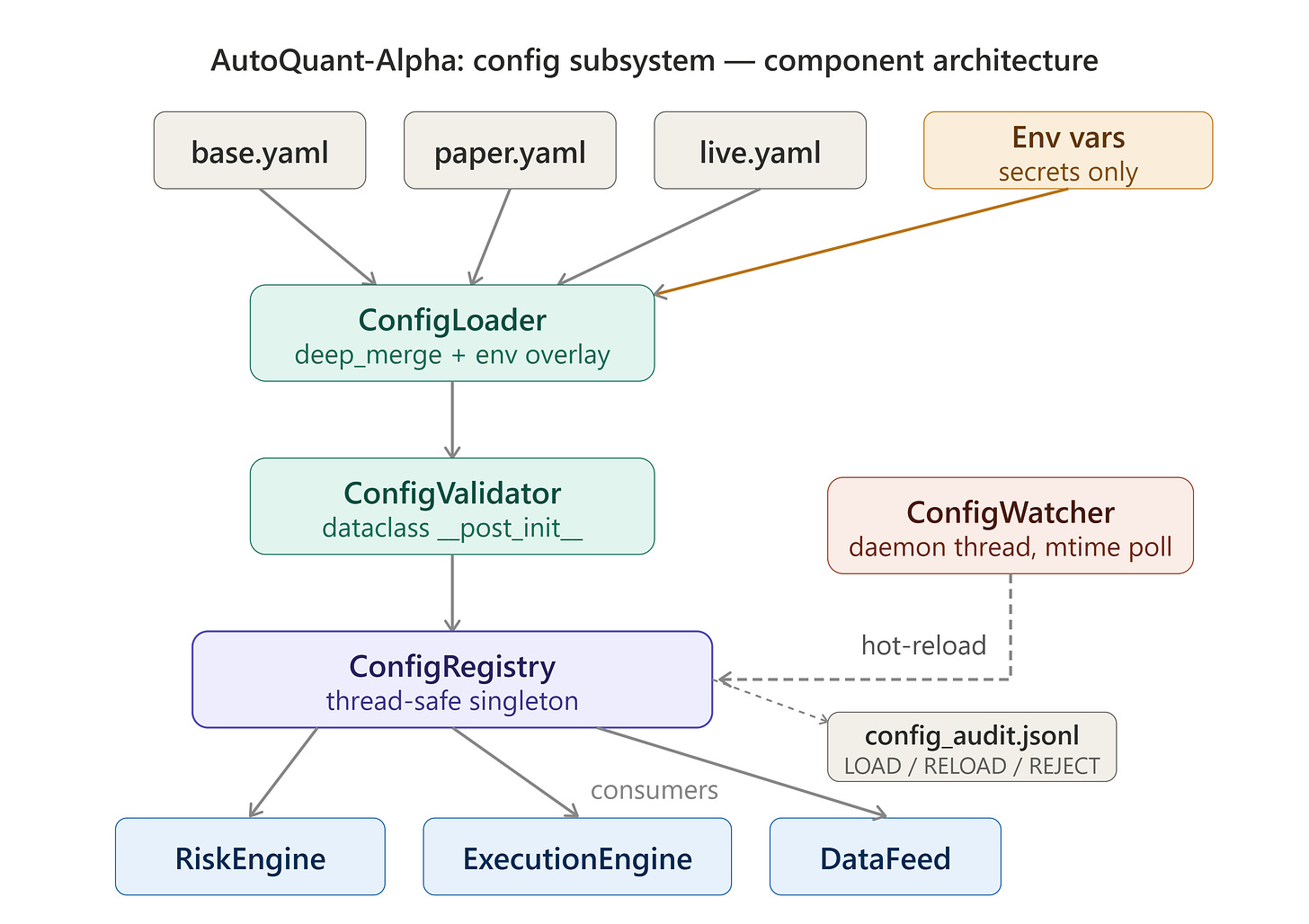

3. The AutoQuant-Alpha Architecture: Layered Config with Validation

We implement a three-layer config system based on the 12-factor app methodology, adapted for trading systems:

Layer 1: base.yaml — System-wide defaults. Never changes per deployment.

Layer 2: {ENV}.yaml — Environment overrides (paper, live, backtest).

Layer 3: Environment Vars — Secrets and runtime overrides. NEVER in YAML files.

The merge order is explicit: each layer overrides the previous. The final config is a single validated dataclass, not a raw dict. Consumers never touch raw YAML.
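Layers 1 and 2 are combined with deep_merge (section 4.2). For Layer 3, the following is a minimal sketch of overlaying runtime overrides on the merged dict. The AQA_ prefix, the double-underscore nesting convention, and apply_env_overrides() are hypothetical, not taken from the AutoQuant-Alpha code. Each value is coerced to the type the YAML layers already use for that key, so an override cannot reintroduce the string-typed values from Path 1.

```python
import copy
import os
from collections.abc import Mapping
from typing import Any

_TRUE = {"1", "true", "yes", "on"}
_FALSE = {"0", "false", "no", "off"}


def _coerce(raw: str, current: Any) -> Any:
    """Parse an env-var string into the type the YAML layers already use for this key."""
    # Check bool before int: bool is a subclass of int in Python
    if isinstance(current, bool):
        lowered = raw.strip().lower()
        if lowered in _TRUE:
            return True
        if lowered in _FALSE:
            return False
        raise ValueError(f"expected a boolean, got {raw!r}")
    if isinstance(current, int):
        return int(raw)
    if isinstance(current, float):
        return float(raw)
    return raw  # strings, and keys absent from the YAML layers, stay strings


def apply_env_overrides(config: dict[str, Any], environ: Mapping[str, str] = os.environ,
                        prefix: str = "AQA_") -> dict[str, Any]:
    """Layer 3 (hypothetical scheme): AQA_RISK__MAX_OPEN_POSITIONS=8 sets risk.max_open_positions."""
    result = copy.deepcopy(config)  # never mutate the merged YAML layers
    for name, raw in environ.items():
        if not name.startswith(prefix):
            continue
        # AQA_RISK__MAX_OPEN_POSITIONS -> ["risk", "max_open_positions"]
        *parents, leaf = name[len(prefix):].lower().split("__")
        node = result
        for key in parents:
            node = node.setdefault(key, {})
            if not isinstance(node, dict):
                raise ValueError(f"{name}: {key!r} is not a config section")
        node[leaf] = _coerce(raw, node.get(leaf))
    return result


merged = {"risk": {"max_open_positions": 5, "risk_per_trade": 0.01}, "paper_trading": True}
overrides = {"AQA_RISK__MAX_OPEN_POSITIONS": "8", "AQA_PAPER_TRADING": "false", "HOME": "/root"}
print(apply_env_overrides(merged, overrides))
# {'risk': {'max_open_positions': 8, 'risk_per_trade': 0.01}, 'paper_trading': False}
```

Coercing against the existing type also means a typo such as AQA_RISK__MAX_OPEN_POSITIONS=eight fails loudly with ValueError at startup, instead of silently injecting a string.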

```text
ConfigLoader
  → deep_merge(base, env)
  → ConfigValidator
  → ConfigRegistry (singleton)
         ↕
    ConfigWatcher (threading.Thread)
      polls mtime every N seconds
      → triggers re-load on change
```

Secrets (API keys) are injected exclusively through environment variables. They appear in the dataclass but never in any YAML file. A misconfigured .gitignore cannot leak your trading credentials.
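Below is a minimal sketch of that injection step. It assumes hypothetical variable names ALPACA_API_KEY and ALPACA_API_SECRET and a frozen dataclass for the credentials. field(repr=False) keeps the values out of the dataclass repr, so logging the config object does not print the keys. Error messages name the missing variables without echoing any value.

```python
import os
from collections.abc import Mapping
from dataclasses import dataclass, field


class ConfigValidationError(ValueError):
    """Raised when required configuration is missing or invalid."""


@dataclass(frozen=True)
class BrokerSecrets:
    # repr=False keeps the values out of logs and tracebacks that print the config
    api_key: str = field(repr=False)
    api_secret: str = field(repr=False)


REQUIRED = ("ALPACA_API_KEY", "ALPACA_API_SECRET")  # hypothetical variable names


def load_secrets(environ: Mapping[str, str] = os.environ) -> BrokerSecrets:
    """Read secrets from the environment only; fail fast if any are missing or empty."""
    missing = [name for name in REQUIRED if not environ.get(name)]
    if missing:
        raise ConfigValidationError(f"missing environment variables: {', '.join(missing)}")
    return BrokerSecrets(api_key=environ["ALPACA_API_KEY"],
                         api_secret=environ["ALPACA_API_SECRET"])


creds = load_secrets({"ALPACA_API_KEY": "test-key", "ALPACA_API_SECRET": "test-secret"})
print(creds)  # BrokerSecrets()
```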

4. Implementation Deep Dive

4.1 The Config Dataclass: Zero Magic, Full Validation

We use Python 3.11 dataclasses with __post_init__ validation rather than Pydantic. This is intentional: you need to understand what validation does, not just that it runs.

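```python
from dataclasses import dataclass, fields


class ConfigValidationError(ValueError):
    """Raised when a config section fails type or bounds checks."""


@dataclass
class RiskConfig:
    max_position_usd: float
    risk_per_trade: float
    max_daily_drawdown: float
    max_open_positions: int

    def __post_init__(self):
        # Annotations are not enforced at runtime, so reject non-numeric values first
        for f in fields(self):
            value = getattr(self, f.name)
            if isinstance(value, bool) or not isinstance(value, (int, float)):
                raise ConfigValidationError(f"{f.name} must be numeric, got {value!r}")

        if not 0 < self.risk_per_trade < 0.1:
            raise ConfigValidationError(
                f"risk_per_trade={self.risk_per_trade} outside safe bounds (0, 0.1). "
                "This is a trading system, not a calculator."
            )

        if self.max_daily_drawdown <= 0 or self.max_daily_drawdown >= 1.0:
            raise ConfigValidationError(
                f"max_daily_drawdown must be in (0.0, 1.0), got {self.max_daily_drawdown}"
            )
```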

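__post_init__ runs at the end of the generated __init__, after every field is populated. The dataclass enforces the struct layout, because a missing or unexpected field raises TypeError. It does not check the type annotations at runtime, though. A string like '5%' is accepted by __init__ and is only caught if __post_init__ checks for it, alongside the domain logic that enforces the business rules. If validation fails during a hot-reload, the current valid config stays active. The system logs the error and continues. It does not restart. It does not crash. This is the difference between a warning and an outage.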
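Two failure modes slip past bounds checks like the ones above. A misspelled YAML key makes the generated __init__ raise TypeError. A string that reaches a numeric comparison raises TypeError too. Neither is a ConfigValidationError. The sketch below uses a trimmed-down RiskConfig and a hypothetical build_risk_config() helper to funnel both into ConfigValidationError, so every bad section is reported and rejected the same way.

```python
from dataclasses import dataclass


class ConfigValidationError(ValueError):
    """Raised when a config section fails validation."""


@dataclass
class RiskConfig:
    max_position_usd: float
    risk_per_trade: float
    max_daily_drawdown: float
    max_open_positions: int

    def __post_init__(self):
        # Trimmed: only the risk_per_trade bound from the full class
        if not 0 < self.risk_per_trade < 0.1:
            raise ConfigValidationError(f"risk_per_trade={self.risk_per_trade} outside (0, 0.1)")


def build_risk_config(raw: dict) -> RiskConfig:
    """Hypothetical helper: build the section, converting TypeError into ConfigValidationError."""
    try:
        return RiskConfig(**raw)
    except TypeError as exc:
        # Missing or misspelled keys, or a str compared against a numeric bound
        raise ConfigValidationError(f"risk section is malformed: {exc}") from exc


good = {"max_position_usd": 10_000.0, "risk_per_trade": 0.01,
        "max_daily_drawdown": 0.05, "max_open_positions": 5}
typo = {**{k: v for k, v in good.items() if k != "max_position_usd"}, "max_positon_usd": 10_000.0}
as_string = {**good, "risk_per_trade": "2%"}

print(build_risk_config(good).risk_per_trade)  # 0.01
for bad in (typo, as_string):
    try:
        build_risk_config(bad)
    except ConfigValidationError as exc:
        print(f"rejected: {exc}")
```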

4.2 The Deep Merge: Why dict.update() Kills You

Standard dict.update() does a shallow merge. This means:

```python
base = {'risk': {'max_pos': 100, 'drawdown': 0.05}}
env = {'risk': {'max_pos': 500}}
base.update(env)
# Result: {'risk': {'max_pos': 500}} ← drawdown GONE
```

Your base config’s drawdown guard vanishes silently. We implement a recursive deep_merge:

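```python
def deep_merge(base: dict, override: dict) -> dict:
    """Return a new dict: override's values win, nested dicts are merged key by key."""
    result = base.copy()  # base itself is never mutated by the merge
    for key, value in override.items():
        if key in result and isinstance(result[key], dict) and isinstance(value, dict):
            result[key] = deep_merge(result[key], value)  # recurse into nested sections
        else:
            result[key] = value  # scalars and lists replace the base value wholesale
    return result
```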

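Every nested key is preserved unless explicitly overridden. Only dicts are merged recursively. Any other value in the override, including a list, replaces the base value wholesale. The cost is O(n) in total config size, and since config files are measured in kilobytes, performance is not a concern.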
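The following is a quick check of deep_merge on the example from the start of this section. The function is repeated so the snippet runs standalone. The symbols key is a hypothetical addition that shows lists are replaced rather than merged, and the final print confirms that base is left untouched.

```python
def deep_merge(base: dict, override: dict) -> dict:
    result = base.copy()
    for key, value in override.items():
        if key in result and isinstance(result[key], dict) and isinstance(value, dict):
            result[key] = deep_merge(result[key], value)
        else:
            result[key] = value
    return result


base = {"risk": {"max_pos": 100, "drawdown": 0.05}, "symbols": ["SPY", "QQQ"]}
env = {"risk": {"max_pos": 500}, "symbols": ["IWM"]}

merged = deep_merge(base, env)
print(merged)        # {'risk': {'max_pos': 500, 'drawdown': 0.05}, 'symbols': ['IWM']}
print(base["risk"])  # {'max_pos': 100, 'drawdown': 0.05}
```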

4.3 The ConfigWatcher: Polling vs. inotify

We poll modification times with Path.stat().st_mtime rather than using inotify or watchdog. Why? inotify is Linux-only and subject to per-user kernel limits on watches and instances. A cross-platform wrapper such as watchdog introduces an external dependency. A trading system's config watcher needs to work identically across development (macOS), staging (Linux), and potentially containerized environments where filesystem event propagation is unreliable. The trade-off is latency: a change is picked up within one polling interval, not instantly.

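```python
import logging
import threading
from collections.abc import Callable
from pathlib import Path

logger = logging.getLogger(__name__)


class ConfigWatcher(threading.Thread):
    def __init__(self, paths: list[Path], callback: Callable[[Path], None], interval_s: float = 2.0):
        super().__init__(daemon=True)  # dies with the main process
        self._paths = paths
        self._callback = callback
        self._interval = interval_s
        self._mtimes: dict[Path, float] = {}
        self._stop_event = threading.Event()

    @staticmethod
    def _mtime(p: Path) -> float:
        # A file can vanish between checks (e.g. an editor's atomic rename): treat it as 0.0
        try:
            return p.stat().st_mtime
        except FileNotFoundError:
            return 0.0

    def stop(self) -> None:
        self._stop_event.set()

    def run(self) -> None:
        # Initialize baseline mtimes
        for p in self._paths:
            self._mtimes[p] = self._mtime(p)
        # wait() returns True once stop() sets the event, ending the loop
        while not self._stop_event.wait(self._interval):
            for p in self._paths:
                current = self._mtime(p)
                if current != self._mtimes[p]:
                    self._mtimes[p] = current
                    try:
                        self._callback(p)  # re-load on change
                    except Exception:
                        # An uncaught exception here would silently end the watcher thread
                        logger.exception("Config reload callback failed for %s", p)
```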

Why daemon=True matters: the Python process exits once only daemon threads are left running. Without it, your watcher thread keeps the process alive after a Ctrl+C, and you're back to kill -9 debugging.
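This usage sketch drives the polling loop end to end against a temporary file. It uses a condensed stand-in for the watcher, with a stop() helper that sets _stop_event, so the snippet runs on its own. One detail differs on purpose: the baseline mtimes are recorded in __init__ rather than in run(), so an edit made before the thread's first poll is not missed. The explicit os.utime() bump guards against filesystems with coarse timestamp resolution, where two writes in quick succession can share an mtime.

```python
import os
import tempfile
import threading
from collections.abc import Callable
from pathlib import Path


class ConfigWatcher(threading.Thread):
    """Condensed stand-in for the watcher above; baseline mtimes are taken in __init__."""

    def __init__(self, paths: list[Path], callback: Callable[[Path], None], interval_s: float = 2.0):
        super().__init__(daemon=True)
        self._paths = paths
        self._callback = callback
        self._interval = interval_s
        self._mtimes = {p: p.stat().st_mtime for p in paths}
        self._stop_event = threading.Event()

    def stop(self) -> None:
        self._stop_event.set()

    def run(self) -> None:
        while not self._stop_event.wait(self._interval):
            for p in self._paths:
                current = p.stat().st_mtime
                if current != self._mtimes[p]:
                    self._mtimes[p] = current
                    self._callback(p)


fired: list[Path] = []
reloaded = threading.Event()


def on_change(path: Path) -> None:
    fired.append(path)
    reloaded.set()


with tempfile.TemporaryDirectory() as tmp:
    cfg = Path(tmp) / "paper.yaml"
    cfg.write_text("risk:\n  max_open_positions: 5\n")
    watcher = ConfigWatcher([cfg], on_change, interval_s=0.05)
    watcher.start()
    cfg.write_text("risk:\n  max_open_positions: 8\n")
    # Push mtime forward explicitly so the change is visible even with coarse timestamps
    st = cfg.stat()
    os.utime(cfg, (st.st_atime, st.st_mtime + 5))
    reloaded.wait(timeout=2.0)
    watcher.stop()
    watcher.join()  # stop before the temp directory is deleted

print(f"reload callback fired for {fired[0].name}")
```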

4.4 The ConfigRegistry: Thread-Safe Singleton

The registry is the single source of truth for config access. It uses threading.Lock to protect against TOCTOU (time-of-check-to-time-of-use) races during hot-reload:

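```python
from __future__ import annotations

import logging
import threading
from pathlib import Path

logger = logging.getLogger(__name__)

# TradingConfig and ConfigLoader are defined elsewhere in the system.


class ConfigRegistry:
    _instance: ConfigRegistry | None = None
    _lock: threading.Lock = threading.Lock()
    _config: TradingConfig | None = None
    _loader: ConfigLoader | None = None

    def __new__(cls) -> ConfigRegistry:
        with cls._lock:
            if cls._instance is None:
                cls._instance = super().__new__(cls)
            return cls._instance

    def load(self, loader: ConfigLoader) -> None:
        # Sketch of the load() step the error message below refers to (signature assumed).
        # Unlike reload(), a bad config at startup raises: fail fast before trading begins.
        config = loader.load()
        with self._lock:
            self._loader = loader
            self._config = config

    def get(self) -> TradingConfig:
        with self._lock:
            if self._config is None:
                raise RuntimeError("ConfigRegistry not initialized. Call load() first.")
            return self._config

    def reload(self, changed_path: Path) -> None:
        try:
            new_config = self._loader.load()  # disk I/O and validation, outside the lock
        except Exception as e:  # bad YAML, a half-written file, or ConfigValidationError
            logger.error(f"Hot-reload REJECTED: {e}. Keeping active config.")
            return
        with self._lock:
            self._config = new_config
        logger.info(f"Config hot-reloaded from {changed_path}")
```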

Checking _instance while holding the class-level lock inside __new__ ensures only one registry instance exists, even when several threads construct it at the same time. The reload path holds the lock only for the assignment, never for the I/O. The disk read happens outside the lock, so the event loop is never blocked waiting for a filesystem operation.
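The lock makes each individual get() and each swap atomic. It cannot protect a decision that calls get() twice, because a hot-reload can land between the two reads. The sketch below shows why consumers should take one snapshot per decision. It uses hypothetical stand-in classes: RiskLimits plays the role of TradingConfig, and Registry plays the role of ConfigRegistry.

```python
import threading
from collections.abc import Callable
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskLimits:
    """Hypothetical stand-in for TradingConfig; frozen so a snapshot cannot change underneath you."""
    max_position_usd: float
    max_open_positions: int


class Registry:
    """Minimal stand-in for ConfigRegistry: lock-guarded get() and whole-object swap."""

    def __init__(self, config: RiskLimits):
        self._lock = threading.Lock()
        self._config = config

    def get(self) -> RiskLimits:
        with self._lock:
            return self._config

    def swap(self, config: RiskLimits) -> None:
        with self._lock:
            self._config = config


before = RiskLimits(max_position_usd=1_000.0, max_open_positions=2)
after = RiskLimits(max_position_usd=50_000.0, max_open_positions=10)


def limits_two_reads(reg: Registry, on_midway: Callable[[], None]) -> tuple[float, int]:
    usd = reg.get().max_position_usd
    on_midway()  # a hot-reload lands between the two reads
    return usd, reg.get().max_open_positions


def limits_one_snapshot(reg: Registry, on_midway: Callable[[], None]) -> tuple[float, int]:
    cfg = reg.get()  # one snapshot per decision
    on_midway()
    return cfg.max_position_usd, cfg.max_open_positions


r1 = Registry(before)
print(limits_two_reads(r1, lambda: r1.swap(after)))     # (1000.0, 10): limits from two configs
r2 = Registry(before)
print(limits_one_snapshot(r2, lambda: r2.swap(after)))  # (1000.0, 2): one consistent config
```

Freezing the config dataclass is an assumption here. With it, the registry only ever swaps whole objects, and any snapshot a consumer holds stays internally consistent.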

5. System Diagrams

The three diagrams below show: (1) how the components are wired together, (2) the step-by-step path from file read to hot-reload, and (3) every state the ConfigRegistry can be in during its lifetime.